Next-level spatial intelligence

Our foundational technology combines state-of-the-art visual SLAM, sensor fusion, and advanced AI for real-time positioning, perception, and mapping.

The power of Slamcore

Under the hood

Position

Where am I?For robots to make better decisions as they move through space, they need to know where they are.

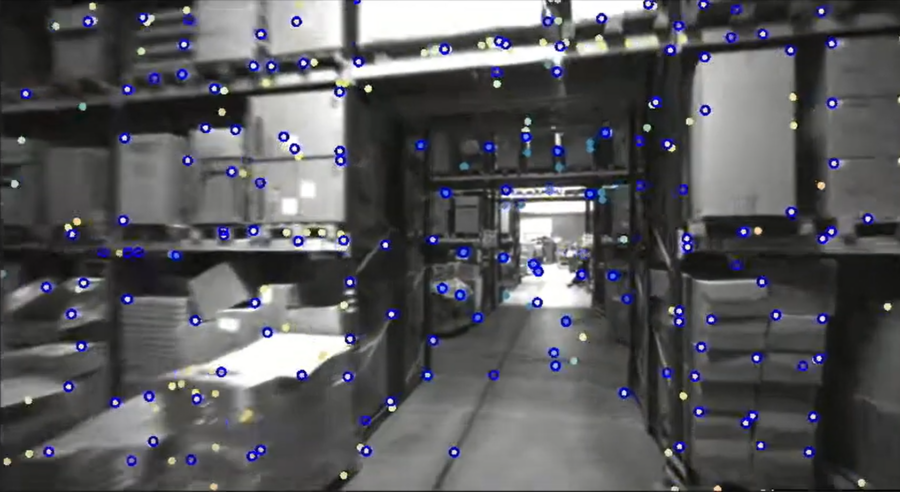

Our visual-inertial SLAM software processes images from a stereo camera, detecting notable features in the environment, which are used to understand where the camera is in space.

Those features are saved to a 3D sparse map which can be used to relocalize over multiple sessions or share with other vision-based products in the same space.

Drift in the position estimate is constantly accounted for by comparing all previous measurements to the live view and triggering corrections as locations are revisited, a process known as loop closure.

Our robust feature detectors, along with intelligent AI models used in our SLAM pipeline, ensure accurate positioning even in challenging, dynamic, and changing environments.

Perceive

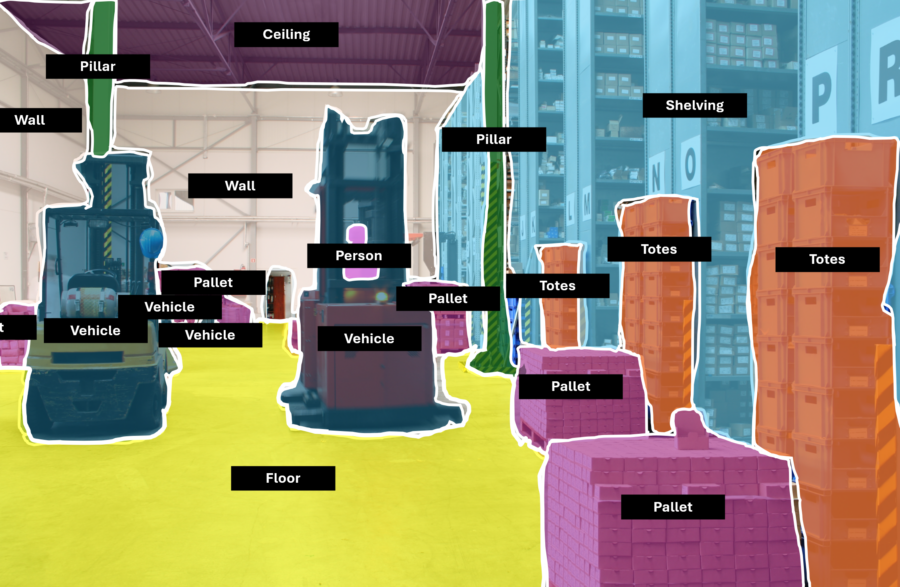

What are the objects around me?Object detection and semantic segmentation are integrated directly into our positioning pipeline and give your robot a sense of what it’s actually seeing.

This information can be used to improve position estimates by ignoring measurements against dynamic objects or to enhance obstacle detection by enabling different navigation behaviors depending on the detected obstacle class.