2023 UPDATE: Sorry, Slamcore Labs has now been discontinued. Please check out the home page for all the latest news.

We designed our Spatial Intelligence SDK so that robot developers can quickly and easily add location and mapping functions to their designs. Downloadable and implemented with a few clicks, the SDK provides robust location and 2.5D maps for any robot using Intel® RealSense™ Depth Cameras and Nvidia Jetson chipsets. Designers can quickly move concepts from the lab to the real world with successful SLAM that can cope with a wide range of environments.

However, the data we process with our SDK can also be used to build richer and more complex maps that support a wide range of additional SLAM functions, including depth completion, 3D mapping and semantic labelling. We are launching Slamcore Labs to give developers a sneak peek of the next-generation features we are building today.

Critically, this interactive showcase is more than just a collection of video demonstrations. Anyone using our Slamcore SDK can record, then upload their own data and ask us to apply these new features to data captured in their environment. We will send back mesh representations, occupancy maps or video demonstrations of these new features using their data. Although these advanced features are not yet available in the standard SDK, for those who just can’t wait, we can work with customers on dedicated projects to deliver fully functioning versions based on their specific hardware, software and use-case specifications.

Slamcore Labs is currently showcasing the following next-generation capabilities.

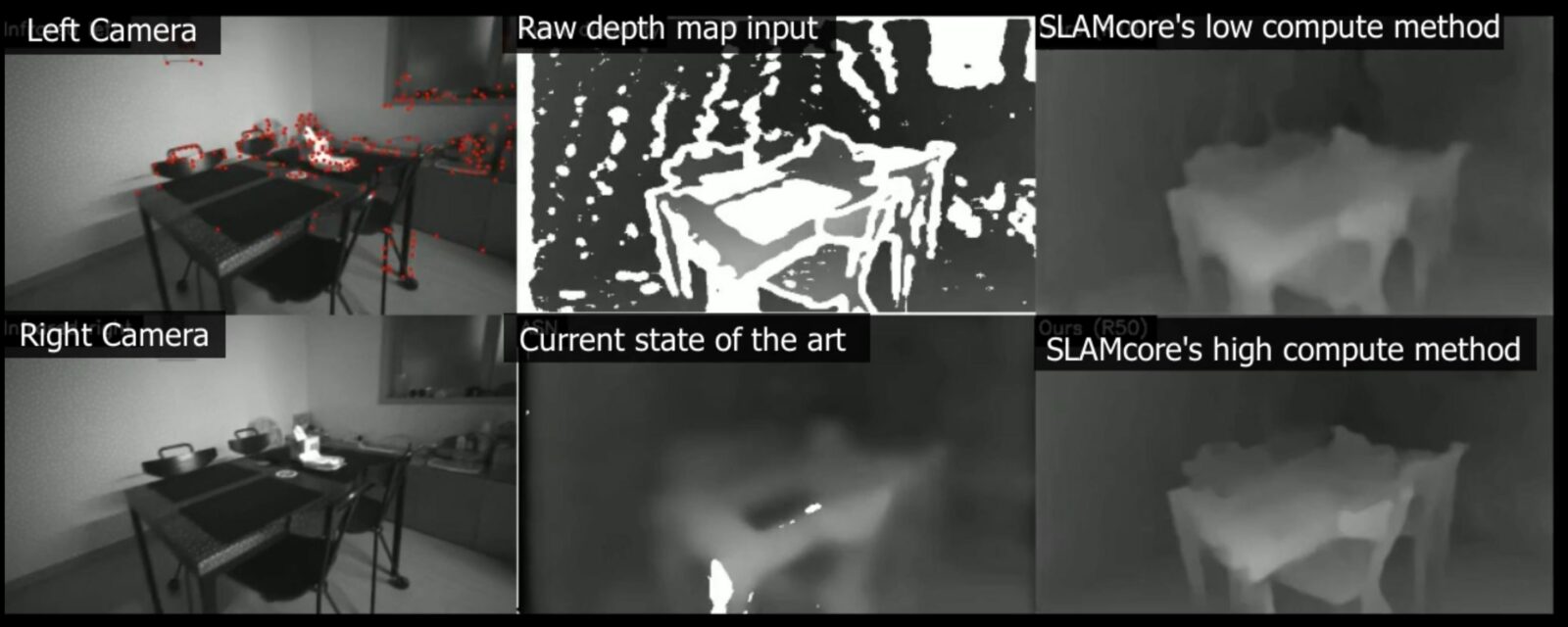

AI Enhanced Depth Maps

Using a dedicated neural network running on a GPU at the edge, Slamcore’s algorithms combine the raw infrared images, depth map and IMU data from Intel® RealSense™ depth cameras to provide a more accurate and smoother disparity or depth map. This is critical for obstacle detection and high-quality mapping. To be useful, it must operate in real time and on board the robot (rather than relying on cloud processing). The examples and simulations we can share in Slamcore Labs currently run on a laptop, but the algorithms are portable and are designed to run on the robot itself. The Slamcore Labs site shows the enhancements that our algorithms provide over both the raw data and the next best commercial solution. By uploading their own data, customers can see how much better their own designs detect objects using these new algorithms.

Intelligent Position

By placing a neural network in front of raw sparse map point-clouds, Slamcore can create better, faster and more resource-efficient position estimates. The AI selects which features are best for positioning: it classifies each feature as static or dynamic and rejects the dynamic ones before they are added to the map. The main benefit is in changing environments, where dynamic or movable objects are no longer used for position estimation. This increases accuracy whilst reducing processor demands.

Slamcore SDK customers can send their own data which we will process in Slamcore Labs and return a sparse map with specific objects labelled or removed.
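To make the idea of rejecting dynamic features concrete, here is a minimal sketch in Python. It is not Slamcore's implementation: the `Feature` type, the `dynamic_score` field and the 0.5 threshold are all hypothetical, and we simply assume that some classifier has already scored each candidate feature.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Feature:
    """A candidate map point. All fields are hypothetical, for illustration."""
    position: Tuple[float, float, float]  # 3D point in the map frame (metres)
    dynamic_score: float  # assumed classifier output in [0, 1]; 1 = certainly dynamic


def select_static_features(
    features: List[Feature], max_dynamic_score: float = 0.5
) -> List[Feature]:
    """Keep only features judged static, so moving objects never enter the map."""
    return [f for f in features if f.dynamic_score <= max_dynamic_score]


candidates = [
    Feature((1.0, 0.2, 2.5), 0.05),  # e.g. the corner of a wall
    Feature((0.4, 0.0, 1.1), 0.92),  # e.g. a person walking past
    Feature((2.3, 1.0, 3.0), 0.10),  # e.g. a fixed shelf
]
static_features = select_static_features(candidates)
print(len(static_features), "of", len(candidates), "features kept")
```

Filtering before insertion, rather than cleaning the map afterwards, means the position estimator never has to process points that are likely to move.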

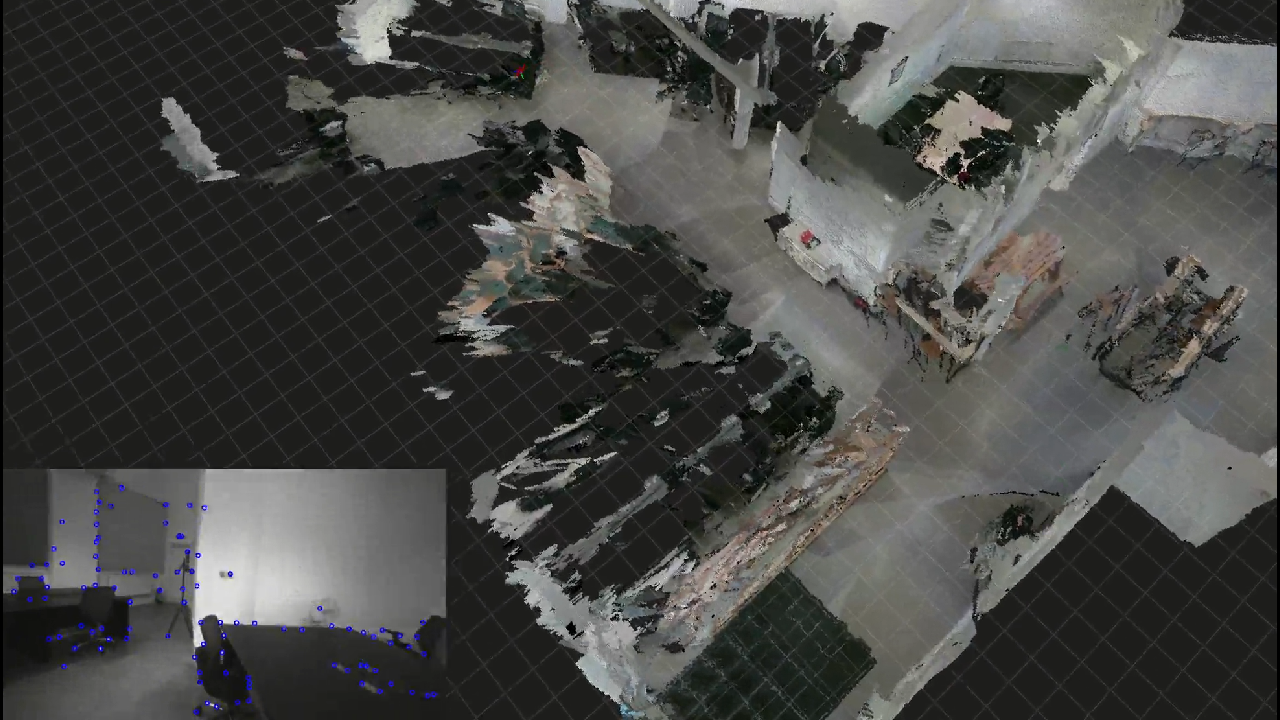

3D RGB Rendered Maps

Customers can already build cm-accurate 2.5D maps using the Slamcore SDK. These add height to ‘flat’ 2D maps. Now, using the same data, Slamcore Labs will create full 3D maps with RGB rendering. As well as providing detail and texture that realistically replicate the real environment around the robot, they allow precise calculation of occupied and free space in three dimensions. Customers uploading data from the SDK will receive a machine-readable mesh file that can be used on their platform.
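One common way to reason about occupied space in three dimensions is a voxel grid. The sketch below is an illustration under our own assumptions, not the format Slamcore Labs returns: it takes a handful of 3D points (for example, vertices sampled from a mesh), marks the voxels that contain them as occupied, and treats every other voxel as unoccupied. The function names and the 10 cm voxel size are hypothetical.

```python
import math
from typing import Iterable, Set, Tuple

Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]


def voxel_index(point: Point, voxel_size: float) -> Voxel:
    """Return the integer index of the voxel that contains the point."""
    x, y, z = point
    return (
        math.floor(x / voxel_size),
        math.floor(y / voxel_size),
        math.floor(z / voxel_size),
    )


def occupied_voxels(points: Iterable[Point], voxel_size: float) -> Set[Voxel]:
    """Collect the set of voxels that contain at least one point."""
    return {voxel_index(p, voxel_size) for p in points}


def is_unoccupied(position: Point, occupied: Set[Voxel], voxel_size: float) -> bool:
    """True if no observed point falls in the voxel containing the position."""
    return voxel_index(position, voxel_size) not in occupied


VOXEL_SIZE = 0.1  # metres; an assumed resolution
points = [
    (0.05, 0.05, 0.05),
    (0.07, 0.02, 0.01),  # falls in the same voxel as the first point
    (0.25, 0.05, 0.05),
    (-0.05, 0.00, 0.00),  # negative coordinates floor towards -infinity
]
occupied = occupied_voxels(points, VOXEL_SIZE)
print(sorted(occupied))
print(is_unoccupied((0.15, 0.05, 0.05), occupied, VOXEL_SIZE))
```

Note that "unoccupied" here only means that no point was observed in that voxel. Distinguishing genuinely free space from unexplored space usually requires tracing rays from the sensor, which this sketch omits.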

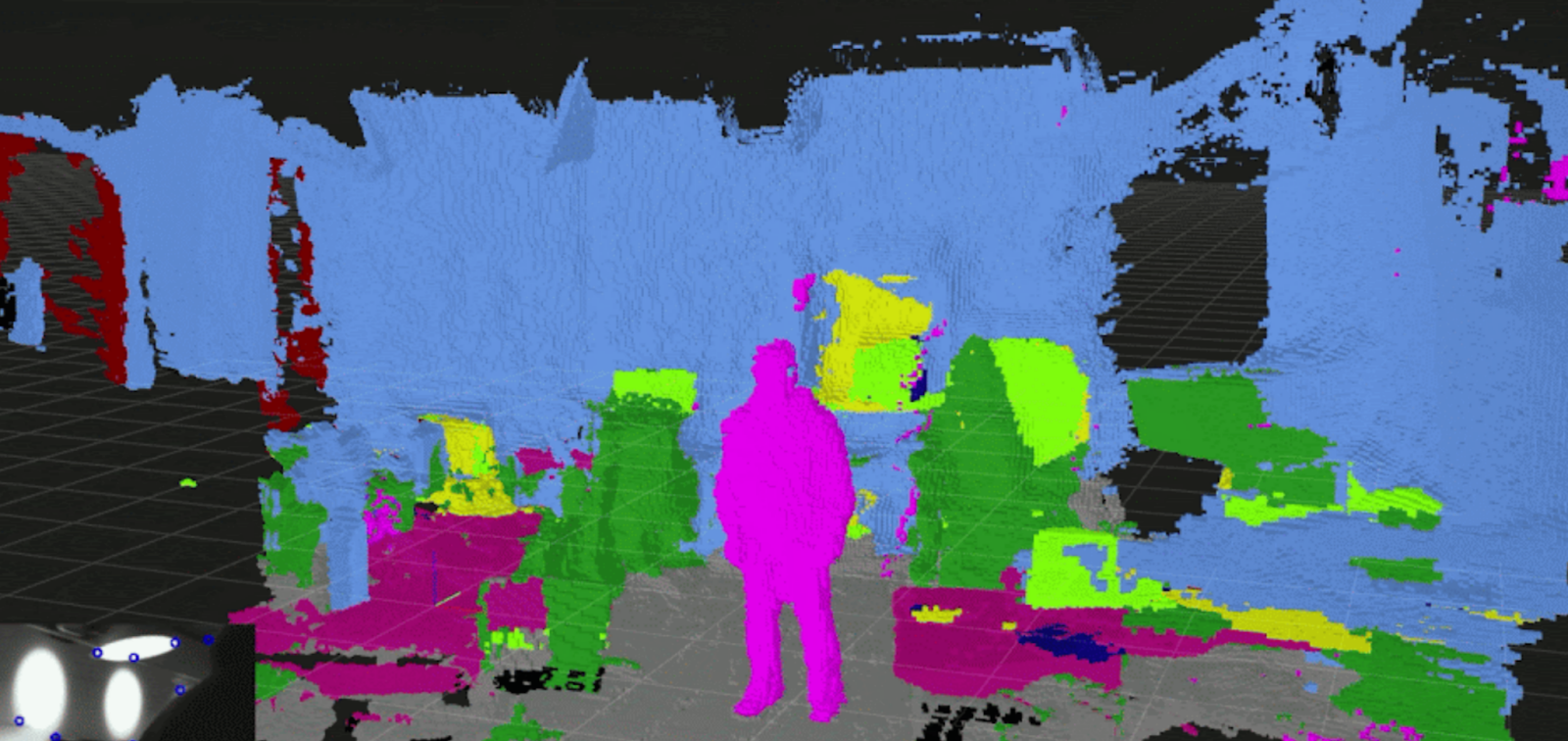

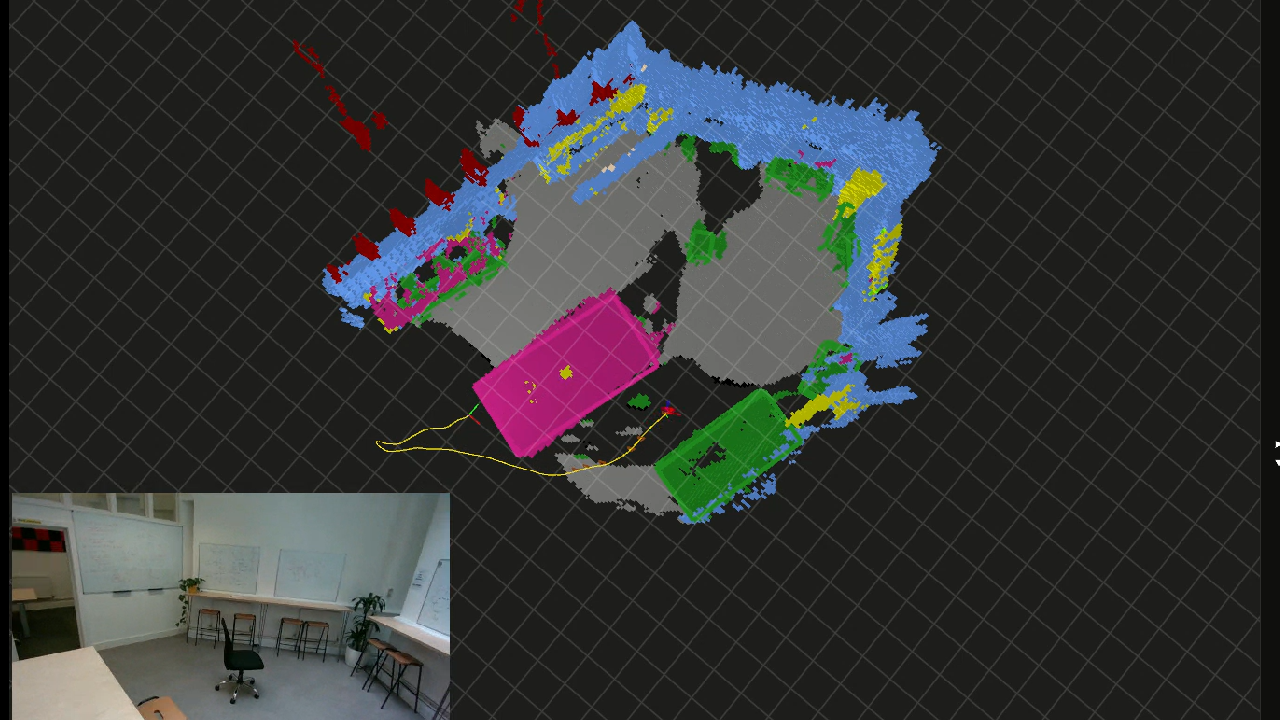

Semantic 3D Mapping

We can also identify and label the different things in a map. Using our semantic recognition algorithms, an entire map can be colour coded to identify its different surfaces and objects. Walls, floors, tables, people, etc. can all be colour coded for easy recognition. Once fully deployed, developers will be able to automatically remove specific types of object from the map, again simplifying processing and making positioning within the map faster, more processor-efficient and more accurate.

Semantic 3D Mapping with Instance Segmentation

Once maps have been semantically labelled, this next-generation feature identifies individual instances of each semantic class. So rather than knowing only that the map has ‘chairs’ in it, it can identify three chairs and treat them as individual objects. This level of sophistication has many real-world benefits, as our visual-inertial SLAM system can pass individual objects to other parts of the control stack, where they can support wide-ranging functions.
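The difference between a semantic label and an instance can be shown with a deliberately simple stand-in technique. The sketch below does not use a neural network and is not how Slamcore performs instance segmentation: it assumes a map already stored as labelled voxels (a hypothetical representation) and groups touching voxels with the same label into separate instances using a flood fill.

```python
from collections import deque
from typing import Dict, List, Set, Tuple

Voxel = Tuple[int, int, int]

# Six face-adjacent neighbours of a voxel.
NEIGHBOUR_OFFSETS = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def split_into_instances(labelled: Dict[Voxel, str]) -> Dict[str, List[Set[Voxel]]]:
    """Group touching voxels that share a label into separate instances."""
    instances: Dict[str, List[Set[Voxel]]] = {}
    visited: Set[Voxel] = set()
    for start, label in labelled.items():
        if start in visited:
            continue
        # Breadth-first flood fill over neighbours carrying the same label.
        component = {start}
        visited.add(start)
        queue = deque([start])
        while queue:
            x, y, z = queue.popleft()
            for dx, dy, dz in NEIGHBOUR_OFFSETS:
                neighbour = (x + dx, y + dy, z + dz)
                if neighbour not in visited and labelled.get(neighbour) == label:
                    visited.add(neighbour)
                    component.add(neighbour)
                    queue.append(neighbour)
        instances.setdefault(label, []).append(component)
    return instances


# A toy labelled map: three separate chairs and one table.
labelled_map = {
    (0, 0, 0): "chair", (1, 0, 0): "chair",  # first chair (two voxels)
    (5, 0, 0): "chair",                      # second chair
    (9, 0, 0): "chair", (9, 1, 0): "chair",  # third chair
    (3, 0, 0): "table",
}
instances = split_into_instances(labelled_map)
for label, groups in instances.items():
    print(label, len(groups))
```

Each resulting group could then be handed to another part of the control stack as an individual object. Two chairs pushed together would merge into one group under this simple rule, which is one reason learned instance segmentation is used in practice.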

New capabilities are being added all the time as Slamcore engineers innovate and expand the power of the Slamcore algorithms. Customers can test all these emerging solutions with their own data. From the Slamcore Labs page they simply upload data sets and specify which features they want to explore. Our engineers will then apply these algorithms to their data and send back either mesh maps or dedicated videos showing the resulting 3D maps of their data.

Those wanting to embark on more complex demonstrations or dedicated projects that leverage these innovations should get in touch via the Slamcore Labs page.

At Slamcore we are focused on democratizing access to fast, accurate and robust SLAM that is viable at commercial scope and scale. Our SDK provides an effective solution for many as they make the journey from lab to real-world deployment. But there are many other ways in which spatial intelligence can add value to robots. Slamcore Labs provides a new route to explore emerging possibilities and to experiment with leading-edge features. We hope that many of our customers and the wider robotics community will participate and interact with our latest innovations in Slamcore Labs.